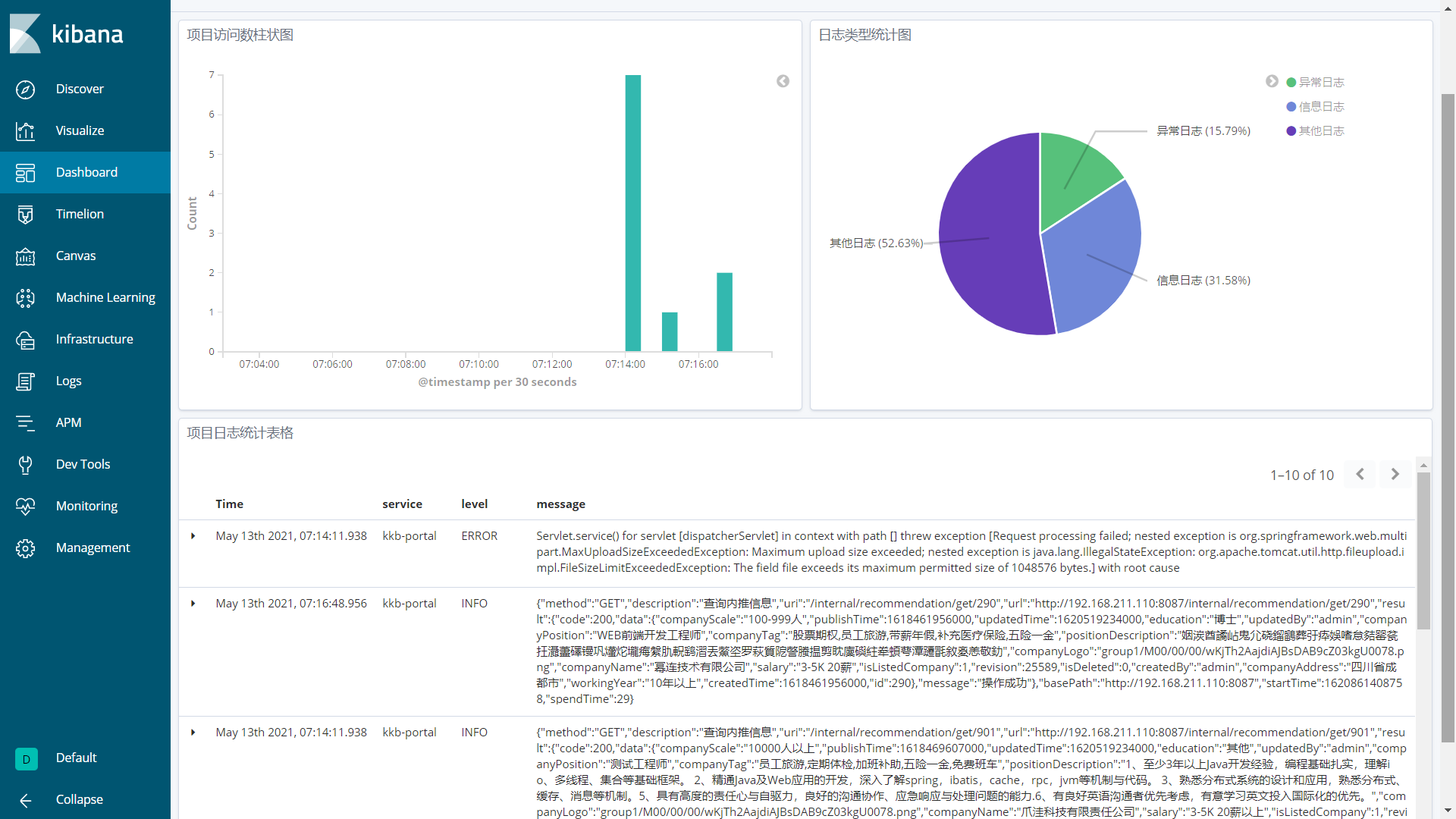

ELK Log Collection System

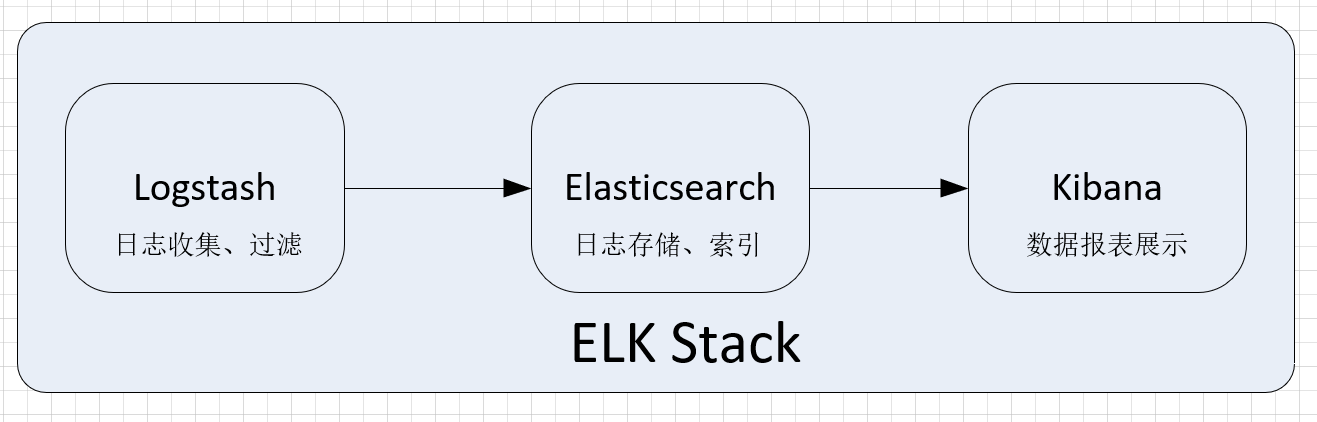

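1. System architecture

1.1 System components

- Logstash: a lightweight, open-source framework for collecting and processing logs
  - Gathers scattered, heterogeneous logs in one place
  - Applies custom filtering and parsing
  - Ships the result to a configured destination

- Elasticsearch: a distributed, near-real-time search and analytics engine
  - Used mainly for full-text search, structured search, and analytics
  - Search and analysis results are available in near real time
  - Distributed architecture and document storage, with every field indexed
  - Developer-friendly REST interface that speaks JSON

- Kibana: an open-source platform for data analysis and visualization
  - Used for efficient search, visual summaries, and multi-dimensional analysis of data
  - Works directly on the data stored in Elasticsearch
  - Browser-based interface for quickly building dynamic dashboards
  - Monitors the state of, and changes to, Elasticsearch data in real time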
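To make the "every field indexed" point above concrete, here is a toy inverted index in Python. It is only an illustration of the idea, not how Elasticsearch or Lucene is actually implemented; the function names and sample documents are made up for this sketch.

```python
from collections import defaultdict


def build_inverted_index(docs):
    """Map (field, token) -> set of document ids, covering every field."""
    index = defaultdict(set)
    for doc_id, doc in docs.items():
        for field, value in doc.items():
            # Naive analysis step: lowercase and split on whitespace.
            for token in str(value).lower().split():
                index[(field, token)].add(doc_id)
    return index


def search(index, field, term):
    """Return the sorted ids of documents whose field contains the term."""
    return sorted(index.get((field, term.lower()), set()))


# Hypothetical log documents, shaped like the JSON events used later on.
docs = {
    1: {"level": "ERROR", "service": "order", "message": "payment timeout"},
    2: {"level": "INFO", "service": "order", "message": "order created"},
    3: {"level": "ERROR", "service": "user", "message": "login timeout"},
}
index = build_inverted_index(docs)

print(search(index, "level", "error"))      # [1, 3]
print(search(index, "message", "timeout"))  # [1, 3]
print(search(index, "service", "order"))    # [1, 2]
```

Because each field gets its own entries, a lookup on `level`, `service`, or `message` is a dictionary access rather than a scan over all documents.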

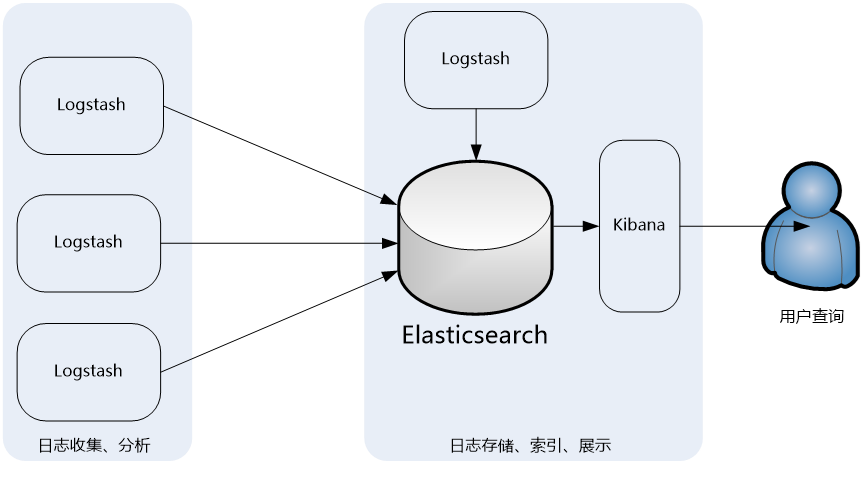

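1.2 Workflow

- Log collection and processing: Logstash runs on each node, collects the relevant logs and data, parses and filters them, and sends the result to Elasticsearch on a remote server for storage

- Log storage and retrieval: Elasticsearch stores the data compressed and split into shards, and exposes a range of APIs for querying and manipulating it

- Log visualization and analysis: the Kibana web UI lets users query logs interactively and build reports as needed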
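The three stages above can be sketched as a small in-memory simulation. This is a minimal sketch under stated assumptions, not real Logstash or Elasticsearch code: the function names are hypothetical, the sample events are invented, and the `print_time` field and `kkb-<type>-<date>` index naming are borrowed from the configuration shown later in this document.

```python
import json
from collections import defaultdict
from datetime import datetime, timezone


def collect(raw_lines, log_type):
    """Stage 1a (input): parse JSON log lines and tag each event with a type."""
    for line in raw_lines:
        event = json.loads(line)
        event["type"] = log_type
        yield event


def transform(event):
    """Stage 1b (filter): turn print_time into a UTC @timestamp and drop it."""
    local = datetime.strptime(event.pop("print_time"), "%Y-%m-%dT%H:%M:%S,%f%z")
    event["@timestamp"] = local.astimezone(timezone.utc)
    return event


def store(indices, event):
    """Stage 2 (storage): append the event to a per-type, per-day index."""
    day = event["@timestamp"].strftime("%Y.%m.%d")
    indices[f"kkb-{event['type']}-{day}"].append(event)


def query(indices, index_name, field, value):
    """Stage 3 (retrieval): exact-match lookup inside one index."""
    return [e for e in indices.get(index_name, []) if e.get(field) == value]


# Invented sample lines in the JSON shape a service might write.
raw = [
    '{"level": "ERROR", "service": "order", "message": "payment timeout",'
    ' "print_time": "2020-03-26T14:34:37,794+0800"}',
    '{"level": "ERROR", "service": "user", "message": "login failed",'
    ' "print_time": "2020-03-26T15:01:02,120+0800"}',
]

indices = defaultdict(list)
for event in collect(raw, "error"):
    store(indices, transform(event))

hits = query(indices, "kkb-error-2020.03.26", "service", "order")
print(sorted(indices))     # ['kkb-error-2020.03.26']
print(hits[0]["message"])  # payment timeout
```

The real components add buffering, sharding, and full-text analysis, but the division of labor is the same: parse and normalize first, then store by index, then query.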

2. System setup

2.1 References

2.2 Integrating Logstash into the microservice

Add the dependency

- pom.xml

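<!-- Logstash integration -->
<!-- No <version> is given here: it must come from dependencyManagement (for example a parent POM) or be added explicitly -->
<dependency>
    <groupId>net.logstash.logback</groupId>
    <artifactId>logstash-logback-encoder</artifactId>
</dependency>

Application configuration

- application.yml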

logging:
  level:
    root: info
    com.kkb: debug
  file:
    path: /var/logs

Log configuration
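The `logging.level` entries in application.yml are resolved by logger name: a logger without its own level inherits from its nearest configured ancestor, ending at `root`. Python's standard `logging` module follows the same dotted-name hierarchy, so it can serve as a small analogy here. This is an illustration only, not Spring Boot or Logback code, and the helper name `effective_level` is made up.

```python
import logging

# Mirror the application.yml levels: root at INFO, com.kkb at DEBUG.
logging.getLogger().setLevel(logging.INFO)
logging.getLogger("com.kkb").setLevel(logging.DEBUG)


def effective_level(logger_name):
    """Return the level name inherited from the nearest configured ancestor."""
    logger = logging.getLogger(logger_name)
    return logging.getLevelName(logger.getEffectiveLevel())


# A class under com.kkb inherits DEBUG from the com.kkb entry.
print(effective_level("com.kkb.project.common.log.WebLogAspect"))  # DEBUG
# Anything outside com.kkb falls back to the root level.
print(effective_level("org.springframework.web"))                  # INFO
```

In practice this means application code under `com.kkb` logs at DEBUG while framework packages stay at INFO unless they are given their own entry.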

- logback-spring.xml

Configuration file walkthrough:

<?xml version="1.0" encoding="UTF-8"?>
<!DOCTYPE configuration>
<configuration>
    <!-- Import Spring Boot's default logging configuration -->
    <include resource="org/springframework/boot/logging/logback/defaults.xml"/>
    <!-- Use the default console appender -->
    <include resource="org/springframework/boot/logging/logback/console-appender.xml"/>
    <!-- Read the application name from the Spring environment -->
    <springProperty scope="context" name="APP_NAME" source="spring.application.name" defaultValue="springBoot"/>
    <!-- Read the log directory from the Spring environment -->
    <springProperty scope="context" name="LOG_FILE_PATH" source="logging.file.path"/>

    <!-- Write DEBUG logs to a file -->
    <appender name="FILE_DEBUG" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <!-- Accept logs at DEBUG level and above -->
        <filter class="ch.qos.logback.classic.filter.ThresholdFilter">
            <level>DEBUG</level>
        </filter>
        <encoder>
            <!-- Use the default file log pattern -->
            <pattern>${FILE_LOG_PATTERN}</pattern>
            <charset>UTF-8</charset>
        </encoder>
        <rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
            <!-- File naming pattern -->
            <fileNamePattern>${LOG_FILE_PATH}/debug/${APP_NAME}-%d{yyyy-MM-dd}-%i.log</fileNamePattern>
            <!-- Maximum file size before rolling over to a new file; defaults to 10 MB -->
            <maxFileSize>${LOG_FILE_MAX_SIZE:-10MB}</maxFileSize>
            <!-- Number of days to keep log files; defaults to 30 -->
            <maxHistory>${LOG_FILE_MAX_HISTORY:-30}</maxHistory>
        </rollingPolicy>
    </appender>

    <!-- Write ERROR logs to a file -->
    <appender name="FILE_ERROR" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <!-- Accept ERROR-level logs only -->
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>ERROR</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <encoder>
            <!-- Use the default file log pattern -->
            <pattern>${FILE_LOG_PATTERN}</pattern>
            <charset>UTF-8</charset>
        </encoder>
        <rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
            <!-- File naming pattern -->
            <fileNamePattern>${LOG_FILE_PATH}/error/${APP_NAME}-%d{yyyy-MM-dd}-%i.log</fileNamePattern>
            <!-- Maximum file size before rolling over to a new file; defaults to 10 MB -->
            <maxFileSize>${LOG_FILE_MAX_SIZE:-10MB}</maxFileSize>
            <!-- Number of days to keep log files; defaults to 90 -->
            <maxHistory>${LOG_FILE_MAX_HISTORY:-90}</maxHistory>
        </rollingPolicy>
    </appender>

    <!-- Write DEBUG logs as JSON into the ELK log directory -->
    <appender name="LOG_STASH_DEBUG" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <!-- Accept logs at DEBUG level and above -->
        <filter class="ch.qos.logback.classic.filter.ThresholdFilter">
            <level>DEBUG</level>
        </filter>
        <encoder charset="UTF-8" class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">
            <providers>
                <timestamp>
                    <timeZone>Asia/Shanghai</timeZone>
                </timestamp>
                <!-- Custom JSON output format -->
                <pattern>
                    <pattern>
                        {
                        "project": "kkb-project",
                        "level": "%level",
                        "print_time": "%date{\"yyyy-MM-dd'T'HH:mm:ss,SSSZ\"}",
                        "service": "${APP_NAME:-}",
                        "pid": "${PID:-}",
                        "thread": "%thread",
                        "class": "%logger",
                        "message": "%message",
                        "stack_trace": "%exception{20}"
                        }
                    </pattern>
                </pattern>
            </providers>
        </encoder>
        <!-- Path and name of the file currently being written -->
        <file>${LOG_FILE_PATH}/elklogs/${APP_NAME}-debug.log</file>
        <rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
            <!-- Naming pattern for rolled (gzip-compressed) files -->
            <fileNamePattern>${LOG_FILE_PATH}/elklogs/${APP_NAME}-debug-%d{yyyy-MM-dd}-%i.log.gz</fileNamePattern>
            <!-- Maximum file size before rolling over to a new file; defaults to 10 MB -->
            <maxFileSize>${LOG_FILE_MAX_SIZE:-10MB}</maxFileSize>
            <!-- Number of days to keep log files; defaults to 90 -->
            <maxHistory>${LOG_FILE_MAX_HISTORY:-90}</maxHistory>
        </rollingPolicy>
    </appender>

    <!-- Write ERROR logs as JSON into the ELK log directory -->
    <appender name="LOG_STASH_ERROR" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <!-- Accept ERROR-level logs only -->
        <filter class="ch.qos.logback.classic.filter.LevelFilter">
            <level>ERROR</level>
            <onMatch>ACCEPT</onMatch>
            <onMismatch>DENY</onMismatch>
        </filter>
        <encoder charset="UTF-8" class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">
            <providers>
                <timestamp>
                    <timeZone>Asia/Shanghai</timeZone>
                </timestamp>
                <!-- Custom JSON output format -->
                <pattern>
                    <pattern>
                        {
                        "project": "kkb-project",
                        "level": "%level",
                        "print_time": "%date{\"yyyy-MM-dd'T'HH:mm:ss,SSSZ\"}",
                        "service": "${APP_NAME:-}",
                        "pid": "${PID:-}",
                        "thread": "%thread",
                        "class": "%logger",
                        "message": "%message",
                        "stack_trace": "%exception{20}"
                        }
                    </pattern>
                </pattern>
            </providers>
        </encoder>
        <!-- Path and name of the file currently being written -->
        <file>${LOG_FILE_PATH}/elklogs/${APP_NAME}-error.log</file>
        <rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
            <!-- Naming pattern for rolled (gzip-compressed) files -->
            <fileNamePattern>${LOG_FILE_PATH}/elklogs/${APP_NAME}-error-%d{yyyy-MM-dd}-%i.log.gz</fileNamePattern>
            <!-- Maximum file size before rolling over to a new file; defaults to 10 MB -->
            <maxFileSize>${LOG_FILE_MAX_SIZE:-10MB}</maxFileSize>
            <!-- Number of days to keep log files; defaults to 90 -->
            <maxHistory>${LOG_FILE_MAX_HISTORY:-90}</maxHistory>
        </rollingPolicy>
    </appender>

    <!-- Write API access logs as JSON into the ELK log directory -->
    <appender name="LOG_STASH_RECORD" class="ch.qos.logback.core.rolling.RollingFileAppender">
        <encoder charset="UTF-8" class="net.logstash.logback.encoder.LoggingEventCompositeJsonEncoder">
            <providers>
                <timestamp>
                    <timeZone>Asia/Shanghai</timeZone>
                </timestamp>
                <!-- Custom JSON output format -->
                <pattern>
                    <pattern>
                        {
                        "project": "kkb-project",
                        "level": "%level",
                        "print_time": "%date{\"yyyy-MM-dd'T'HH:mm:ss,SSSZ\"}",
                        "service": "${APP_NAME:-}",
                        "class": "%logger",
                        "message": "%message"
                        }
                    </pattern>
                </pattern>
            </providers>
        </encoder>
        <!-- Path and name of the file currently being written -->
        <file>${LOG_FILE_PATH}/elklogs/${APP_NAME}-record.log</file>
        <rollingPolicy class="ch.qos.logback.core.rolling.SizeAndTimeBasedRollingPolicy">
            <!-- Naming pattern for rolled (gzip-compressed) files -->
            <fileNamePattern>${LOG_FILE_PATH}/elklogs/${APP_NAME}-record-%d{yyyy-MM-dd}-%i.log.gz</fileNamePattern>
            <!-- Maximum file size before rolling over to a new file; defaults to 10 MB -->
            <maxFileSize>${LOG_FILE_MAX_SIZE:-10MB}</maxFileSize>
            <!-- Number of days to keep log files; defaults to 90 -->
            <maxHistory>${LOG_FILE_MAX_HISTORY:-90}</maxHistory>
        </rollingPolicy>
    </appender>

    <!-- Log levels for framework internals; with no appender specified, these loggers inherit the appenders defined on the root logger -->
    <logger name="org.slf4j" level="INFO"/>
    <logger name="springfox" level="INFO"/>
    <logger name="io.swagger" level="INFO"/>
    <logger name="org.springframework" level="INFO"/>
    <logger name="org.hibernate.validator" level="INFO"/>
    <logger name="com.alibaba.nacos.client.naming" level="INFO"/>
    <!-- Root logger: default level and appenders -->
    <root level="DEBUG">
        <appender-ref ref="CONSOLE"/>
        <appender-ref ref="FILE_DEBUG"/>
        <appender-ref ref="FILE_ERROR"/>
        <appender-ref ref="LOG_STASH_DEBUG"/>
        <appender-ref ref="LOG_STASH_ERROR"/>
    </root>
    <!-- Logger for API access logs -->
    <logger name="com.kkb.project.common.log.WebLogAspect" level="DEBUG">
        <appender-ref ref="LOG_STASH_RECORD"/>
    </logger>
</configuration>

2.3 ELK environment setup

1. References

2. Pull the images

docker pull elasticsearch:7.6.2
docker pull kibana:7.6.2
docker pull logstash:7.6.2

3. Elasticsearch deployment

Create the container

docker run -p 9200:9200 -p 9300:9300 --name elasticsearch \
  -e "discovery.type=single-node" \
  -e "cluster.name=elasticsearch" \
  -v /home/elasticsearch/plugins:/usr/share/elasticsearch/plugins \
  -v /home/elasticsearch/data:/usr/share/elasticsearch/data \
  -d elasticsearch:7.6.2

Install the IK Analyzer tokenizer plugin

docker exec -it elasticsearch /bin/bash
# Run this command inside the container
elasticsearch-plugin install https://github.com/medcl/elasticsearch-analysis-ik/releases/download/v7.6.2/elasticsearch-analysis-ik-7.6.2.zip
# Exit the container, then restart it from the host so the plugin is loaded
docker restart elasticsearch

Verify Elasticsearch

- Linux

curl -XGET localhost:9200

- Windows

# Open in a browser
http://192.168.211.110:9200

- Sample result

{
  "name" : "d72c19c6bf40",
  "cluster_name" : "elasticsearch",
  "cluster_uuid" : "0TuvBwtZSsWzfR-sMDzQKw",
  "version" : {
    "number" : "7.6.2",
    "build_flavor" : "default",
    "build_type" : "docker",
    "build_hash" : "ef48eb35cf30adf4db14086e8aabd07ef6fb113f",
    "build_date" : "2020-03-26T06:34:37.794943Z",
    "build_snapshot" : false,
    "lucene_version" : "8.4.0",
    "minimum_wire_compatibility_version" : "6.8.0",
    "minimum_index_compatibility_version" : "6.0.0-beta1"
  },
  "tagline" : "You Know, for Search"
}

4. Kibana deployment

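Create the container

# The Kibana image reads settings from upper-case environment variables such as ELASTICSEARCH_HOSTS
docker run --name kibana -p 5601:5601 \
  --link elasticsearch \
  -e "ELASTICSEARCH_HOSTS=http://elasticsearch:9200" \
  -d kibana:7.6.2

5. Logstash deployment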

1. Prepare the environment

- Create the directory and file

mkdir -p /mydata/logstash/config
touch /mydata/logstash/config/logstash.conf

- Configuration

logstash.conf

input {
  # One file input per log kind; "type" is used later to pick the index
  file {
    path => [ "/var/logs/**/*-debug.log" ]
    codec => json
    start_position => "beginning"
    type => "debug"
  }
  file {
    path => [ "/var/logs/**/*-error.log" ]
    codec => json
    start_position => "beginning"
    type => "error"
  }
  file {
    path => [ "/var/logs/**/*-record.log" ]
    codec => json
    start_position => "beginning"
    type => "record"
  }
}

filter {
  # Use the application's print_time as the event timestamp
  date {
    match => [ "print_time", "ISO8601" ]
    target => "@timestamp"
  }
  # Drop fields that are not needed in Elasticsearch
  mutate {
    remove_field => ["@version","print_time","fields","host","log","prospector","tags"]
  }
  # Access logs carry a JSON string in "message"; expand it into fields
  if [type] == "record" {
    json {
      source => "message"
      remove_field => ["message"]
    }
  }
}

output {
  elasticsearch {
    hosts => ["elasticsearch:9200"]
    # One index per log type per day
    index => "kkb-%{type}-%{+YYYY.MM.dd}"
  }
}

2. Create the container

docker run -p 5045:5045 -p 9600:9600 --name logstash \
  --link elasticsearch \
  -e TZ="Asia/Shanghai" \
  -v /mydata/logstash/config/logstash.conf:/usr/share/logstash/config/logstash.conf \
  -v /var/logs:/var/logs \
  -d logstash:7.6.2 -f /usr/share/logstash/config/logstash.conf

3. System showcase
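Besides browsing in Kibana, the collected logs can be inspected through the Elasticsearch search API. The following is a minimal sketch that only builds the HTTP request; the helper name `build_search_request`, the host `localhost:9200`, and the sample date are assumptions for illustration, while the index name follows the `kkb-%{type}-%{+YYYY.MM.dd}` pattern from logstash.conf.

```python
import json
from urllib import request


def build_search_request(base_url, log_type, day, level, size=10):
    """Build a POST /<index>/_search request for one log type and day."""
    index = f"kkb-{log_type}-{day}"
    body = {
        "size": size,
        # Newest events first.
        "sort": [{"@timestamp": {"order": "desc"}}],
        # Match on the "level" field written by the logback JSON pattern.
        "query": {"match": {"level": level}},
    }
    return request.Request(
        url=f"{base_url}/{index}/_search",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_search_request("http://localhost:9200", "error", "2020.03.26", "ERROR")
print(req.get_method(), req.full_url)

# Sending the request needs a running Elasticsearch instance:
# with request.urlopen(req) as resp:
#     print(json.load(resp)["hits"]["total"])
```

Keeping request construction separate from sending makes the query easy to check without a live cluster.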